Anthropic Just Proved Why Agent Operating Systems Matter

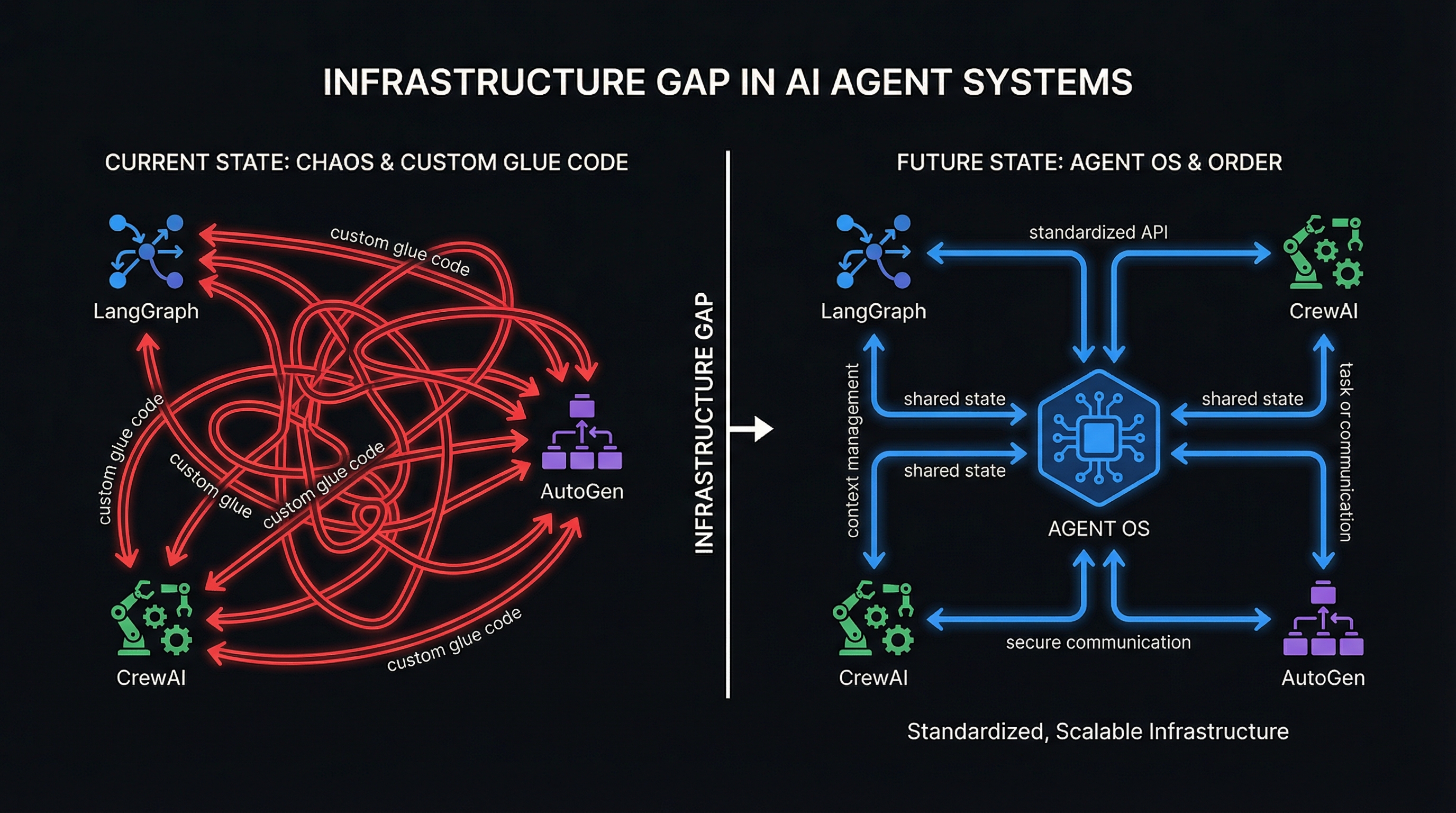

Claude Managed Agents launched yesterday. Here's what it means, and why the open-source alternative matters more than ever.

Yesterday, Anthropic launched something that validates what we have been building for months.

Claude Managed Agents is a fully managed runtime for AI agents — sandboxing, checkpointing, credential management, tracing, and multi-agent coordination, all hosted on Anthropic's infrastructure. It is, by any reasonable definition, a cloud-hosted agent operating system. And the fact that the company behind Claude decided this was their next big bet tells you everything about where the industry is heading.

The prototype-to-production gap for AI agents is the defining infrastructure problem of 2026. Anthropic just said so with their product roadmap. We said so six months ago with our research. The difference is in the approach — and that difference matters.

What Claude Managed Agents Actually Is

Let us be precise, because precision matters when analyzing infrastructure.

Claude Managed Agents is built around four core abstractions: Agent (the model configuration, system prompt, tools, and MCP connections), Environment (an isolated cloud container with pre-installed packages and network rules), Session (a running instance that persists for hours and survives disconnections), and Events (a real-time stream of every action the agent takes, delivered as server-sent events, or SSE).
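To make the relationship between the four abstractions concrete, here is a minimal data-model sketch. The class and field names (`allowed_hosts`, `emit`, the `"tool_call"` event type, and so on) are hypothetical and chosen for illustration; this is not Anthropic's actual API schema. It only shows how an Agent and an Environment combine into a Session that accumulates Events.

```python
from dataclasses import dataclass, field
from typing import Any

# Hypothetical field names: this mirrors the four concepts described above,
# not Anthropic's real API schema.


@dataclass
class Agent:
    model: str
    system_prompt: str
    tools: list[str] = field(default_factory=list)
    mcp_servers: list[str] = field(default_factory=list)


@dataclass
class Environment:
    image: str
    packages: list[str] = field(default_factory=list)
    allowed_hosts: list[str] = field(default_factory=list)  # network rules


@dataclass
class Event:
    type: str  # illustrative names such as "tool_call" or "message"
    payload: dict[str, Any]


@dataclass
class Session:
    agent: Agent
    environment: Environment
    events: list[Event] = field(default_factory=list)

    def emit(self, event_type: str, **payload: Any) -> Event:
        """Record an event; a real runtime would also push it to an SSE stream."""
        event = Event(event_type, payload)
        self.events.append(event)
        return event


session = Session(
    agent=Agent(model="example-model", system_prompt="You triage bugs.", tools=["bash"]),
    environment=Environment(image="python:3.12", allowed_hosts=["api.github.com"]),
)
session.emit("tool_call", tool="bash", command="pytest -q")
print(len(session.events))  # 1
```

The point of the split is that the Agent (what to think with) and the Environment (where to act) are reusable definitions, while the Session and its Events carry the per-run state.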

The platform handles the hard parts that kill agent projects in production: secure sandboxing so agents cannot leak credentials or destroy infrastructure, session checkpointing so multi-hour tasks survive container crashes, scoped permissions so agents stay within their authorized boundaries, and end-to-end execution tracing for governance and debugging.

The pricing is consumption-based: $0.08 per session-hour of active runtime (idle time excluded), plus standard Claude token rates, plus $10 per 1,000 web searches. Prompt caching can reduce token costs by up to 90%.
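A quick way to reason about these rates is a small estimator. It uses only the two published fees quoted above ($0.08 per active session-hour and $10 per 1,000 web searches); token spend is left as an input because Claude token rates vary by model and caching. The function name is hypothetical.

```python
RUNTIME_PER_ACTIVE_HOUR = 0.08  # USD, idle time excluded
SEARCH_PER_1000 = 10.00         # USD per 1,000 web searches


def estimate_session_cost(active_hours: float, web_searches: int,
                          token_cost_usd: float = 0.0) -> float:
    """Runtime + search fees from the published rates; tokens supplied by the caller."""
    runtime = active_hours * RUNTIME_PER_ACTIVE_HOUR
    searches = web_searches / 1000 * SEARCH_PER_1000
    return round(runtime + searches + token_cost_usd, 4)


# One session with 7 active hours and 50 searches, before tokens:
print(estimate_session_cost(7, 50))  # 1.06

# At scale: 100 sessions/day x 8 active hours x 30 days = 24,000 session-hours.
print(estimate_session_cost(24_000, 0))  # 1920.0
```

For a single long session, the runtime fee is small next to typical token spend; it becomes a line item worth tracking once session-hours reach the tens of thousands per month.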

Early adopters include Notion (workspace delegation), Asana (task coordination), Sentry (autonomous debugging), Rakuten (7-hour coding sessions), Atlassian (third-party agents in Confluence), and Vercel (developer tooling integration). These are serious companies running serious workloads.

This is not vaporware. This is production infrastructure, and it is well-engineered.

The Problem It Solves

Every team that has tried to move an AI agent from demo to production has hit the same wall. The model works. The prompt works. The tool calls work. Then you need sandboxing, and that is three months of container engineering. Then you need checkpointing, and that is another month. Then credential management, audit logging, crash recovery, multi-agent coordination — each one a separate infrastructure project.

Anthropic's own estimate is that Claude Managed Agents reduces development time by up to 10x, taking teams from prototype to production in days rather than months. Given the architecture, with its strict separation of brain (LLM), hands (sandbox), and memory (session log), that claim is credible. Anthropic also reports a 60% reduction in median time-to-first-token and a reduction of more than 90% at the 95th percentile.
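Why does separating brain, hands, and memory make checkpointing easier? Because if all progress lives in an append-only log, the process that drives the loop can die and a fresh one can pick up where it left off. The toy sketch below illustrates that general idea only; it is not Anthropic's implementation. `brain` stands in for the LLM, `hands` for the sandbox, and a JSON Lines file for the session log. All names are hypothetical.

```python
import json
import tempfile
from pathlib import Path


class SessionLog:
    """Append-only event log (the 'memory'); it outlives any single harness process."""

    def __init__(self, path: Path):
        self.path = path

    def append(self, event: dict) -> None:
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(event) + "\n")

    def replay(self) -> list[dict]:
        if not self.path.exists():
            return []
        with self.path.open(encoding="utf-8") as f:
            return [json.loads(line) for line in f if line.strip()]


def brain(history: list[dict], plan: list[str]):
    """Stand-in for the LLM: pick the first planned step not yet recorded as done."""
    done = {e["step"] for e in history if e["type"] == "step_done"}
    return next((s for s in plan if s not in done), None)


def hands(step: str) -> str:
    """Stand-in for the sandboxed container that actually executes a step."""
    return f"result of {step}"


def run_session(plan: list[str], log: SessionLog, max_steps=None) -> list[str]:
    """Stateless harness: re-reads memory every iteration, so any process can resume."""
    executed = 0
    while (step := brain(log.replay(), plan)) is not None:
        if max_steps is not None and executed >= max_steps:
            break  # simulate the container dying mid-session
        log.append({"type": "step_done", "step": step, "output": hands(step)})
        executed += 1
    return [e["step"] for e in log.replay()]


with tempfile.TemporaryDirectory() as d:
    log = SessionLog(Path(d) / "session.jsonl")
    print(run_session(["clone", "test", "patch"], log, max_steps=1))  # ['clone']
    print(run_session(["clone", "test", "patch"], log))  # ['clone', 'test', 'patch']
```

The second call starts with no in-memory state at all and still finishes the plan without repeating `clone`, which is the property that lets multi-hour sessions survive a crash.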

The engineering is sound. The abstractions are clean. For teams that are already committed to Claude as their model provider and comfortable with cloud-dependent infrastructure, this is a strong offering.

But that conditional — "already committed to Claude" and "comfortable with cloud-dependent" — is exactly where the story gets interesting.

The Comparison: Managed vs. Open Source

Here is an honest comparison between two approaches to the same problem:

Claude Managed Agents is a closed-source, cloud-hosted runtime. It runs Claude models exclusively. Your agents execute on Anthropic's infrastructure. You pay per session-hour plus tokens. The abstractions are clean but opinionated — four primitives, Anthropic's way.

Qualixar OS is an open-source agent operating system. It supports 10+ model providers (Claude, GPT-4, Gemini, Llama, Mistral, and others through a universal command protocol). It runs locally on your machine or your own infrastructure. The runtime cost is zero — you pay only for the model API calls you choose to make. It implements 12 execution topologies (pipeline, parallel, voting, debate, tournament, and seven more), giving you architectural flexibility that no single-vendor platform can match.
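As a rough illustration of what "execution topology" means when models are interchangeable, here is a sketch of two of the named patterns, voting and pipeline, over a provider-agnostic model signature (prompt in, text out). This is not Qualixar OS's API or its universal command protocol; the `Model` alias and the stub providers are hypothetical stand-ins for real API calls, and the arXiv paper describes the actual semantics.

```python
from collections import Counter
from typing import Callable

# Hypothetical provider-agnostic signature: any model is a function prompt -> answer.
Model = Callable[[str], str]


def voting(models: dict[str, Model], prompt: str) -> tuple[str, dict[str, str]]:
    """Ask every model the same question; return the majority answer and all votes.

    Ties go to the answer that appeared first (Counter preserves insertion order).
    """
    votes = {name: model(prompt) for name, model in models.items()}
    winner, _count = Counter(votes.values()).most_common(1)[0]
    return winner, votes


def pipeline(models: list[Model], prompt: str) -> str:
    """Feed each model's output into the next one."""
    text = prompt
    for model in models:
        text = model(text)
    return text


# Stub "providers" so the example runs without network access.
stubs = {
    "provider_a": lambda p: "42",
    "provider_b": lambda p: "42",
    "provider_c": lambda p: "41",
}
answer, votes = voting(stubs, "What is 6 x 7?")
print(answer)  # 42
print(pipeline([str.upper, lambda s: s + "!"], "draft"))  # DRAFT!
```

Because each topology only depends on the `Model` signature, swapping one provider for another changes a dictionary entry, not the orchestration logic.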

The differences are structural, not cosmetic:

| Dimension | Claude Managed Agents | Qualixar OS |
|---|---|---|
| Model support | Claude only | 10+ providers, any model |
| Execution | Anthropic cloud | Local-first, self-hosted |
| Runtime cost | $0.08/session-hour | $0 |
| Topology | Single-agent + multi-agent preview | 12 topologies with full execution semantics |
| Source | Closed | Open source (Elastic License 2.0) |
| Data residency | Anthropic's servers | Your machine, your rules |
| Vendor lock-in | High | None |

Neither approach is universally superior. They solve different problems for different contexts.

Why You Need Both (Not Either/Or)

The mature position is not "managed vs. open source." It is "managed AND open source, deployed where each makes sense."

Use Claude Managed Agents when you need zero-ops cloud deployment, your team is already standardized on Claude, you want Anthropic to handle sandboxing and credential vaults, and your data governance policies permit cloud execution. For SaaS companies building Claude-powered features for their customers, this is the right choice. Notion and Sentry are making smart bets.

Use Qualixar OS when you need multi-model flexibility (because model leadership changes quarterly), your data cannot leave your infrastructure (regulated industries, government, healthcare), you need execution topologies beyond simple agent loops (debate, tournament, voting — patterns that emerge in complex decision-making), or you need to avoid per-session runtime fees at scale.

The real power comes from running both. Use Managed Agents for cloud-facing customer workloads where Anthropic's sandboxing and enterprise governance add genuine value. Use Qualixar OS for internal tooling, research pipelines, and any workflow where model diversity or data sovereignty is non-negotiable.

We use Claude every day. This blog post was written with Claude. Qualixar OS supports Claude as a first-class model provider. Acknowledging that Anthropic built something good does not diminish the case for open alternatives — it strengthens it. Managed infrastructure works best when there is an open-source counterpart keeping the ecosystem honest.

The Bigger Picture: The Agent Infrastructure War Just Started

Anthropic is not alone. Google has been building agent infrastructure into Vertex AI. OpenAI shipped the Agents SDK. Salesforce has Agentforce. Microsoft is embedding agent runtimes into Azure AI Foundry and Copilot Studio. Every major platform company has concluded the same thing: the model is not the product. The runtime is the product.

This is the "AWS moment" for AI agents. In 2006, you could run servers yourself or use Amazon's managed infrastructure. Both survived. Both thrived. The companies that won were the ones that understood when to use which.

The agent infrastructure market is following the same pattern. Managed runtimes will capture the convenience segment. Open-source runtimes will capture the sovereignty, flexibility, and cost-optimization segments. The boundary between those segments will shift over time, but the coexistence is permanent.

What matters now is that the category is validated. When Anthropic, Google, OpenAI, and Salesforce are all building agent operating systems, the question is no longer "do we need agent infrastructure?" The question is "which agent infrastructure, deployed where, for which workloads?"

What Comes Next

We published the Qualixar OS architecture as a research paper on arXiv (arXiv:2604.06392) precisely because we believe agent operating systems should be studied, not just sold. The paper covers the 12 execution topologies, the universal command protocol, the bootstrap architecture, and the design decisions that make model-agnostic orchestration possible.

Anthropic launching Claude Managed Agents does not change our roadmap. It confirms it. The prototype-to-production gap is real. The need for agent runtime infrastructure is real. And the need for an open-source, model-agnostic, local-first alternative is more real than ever.

The agent infrastructure war just started. We intend to compete on openness, flexibility, and research rigor.

If you want to understand the architecture behind an open-source agent OS, read the paper. If you want to see the product, visit qualixar.com. If you want to run it, the code is on GitHub.

The future of AI agents is not one runtime. It is many runtimes, interoperating, each earning its place by solving real problems for real teams. Yesterday, Anthropic proved that. Today, we build.
